PrimaVera Project

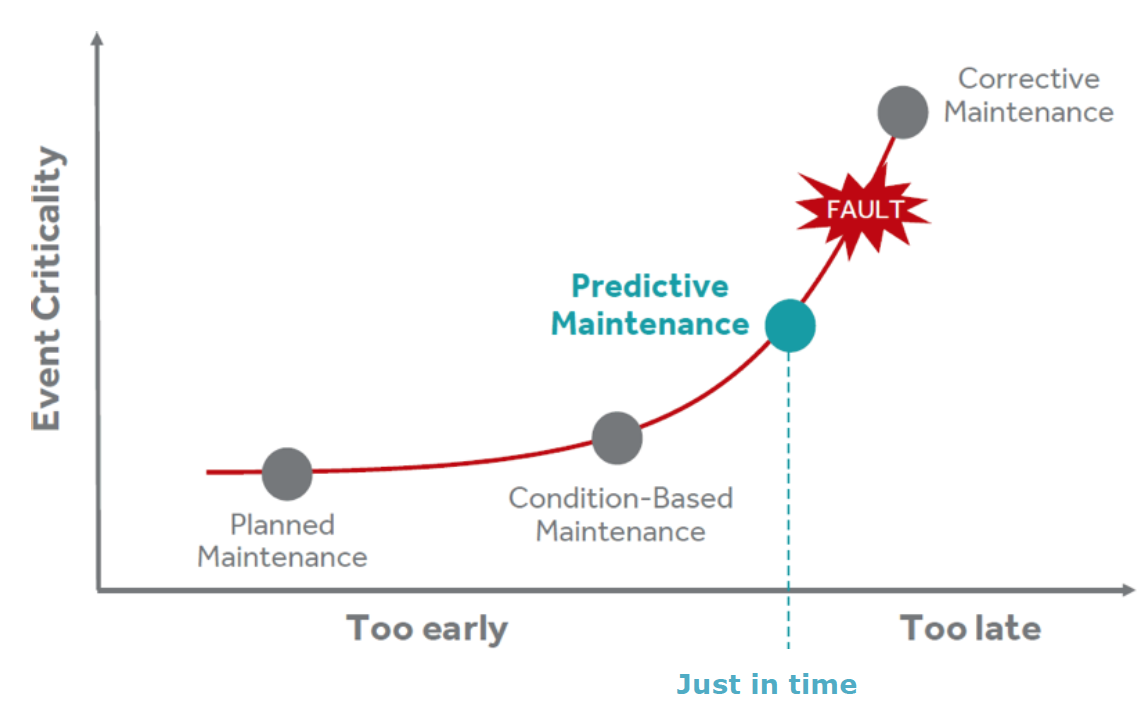

No more train delays, power outages, or failures of production machines? The PrimaVera project, funded by the Dutch National Research Agenda (NWA), represents a major step towards this goal. Predictive maintenance, or just-in-time maintenance (maintenance carried out just before a system breaks down), can increase the reliability of infrastructure and production resources while reducing maintenance costs.

Existing predictive maintenance techniques only work for small-scale systems and are difficult to scale up. Choices made at one point in the predictive maintenance chain strongly influence the other processes in that chain. For example, the choice of sensors and measurements determines which kinds of predictions can be made, and therefore also the quality of those predictions. That is why cross-level optimization methods are being developed within PrimaVera.

2024

Maintenance Strategies for Sewer Pipes with Multi-State Deterioration and Deep Reinforcement Learning. In: 8th European Conference of the Prognostics and Health Management Society 2024, PHME24, 2024.

Fault Tree inference using Multi-Objective Evolutionary Algorithms and Confusion Matrix-based metrics. In: Formal Methods for Industrial Critical Systems (FMICS), Springer, 2024.

Comparing Homogeneous and Inhomogeneous Time Markov Chains for Modeling Deterioration in Sewer Pipe Networks. In: 34th European Safety and Reliability Conference, ESREL 2024: Advances in Reliability, Safety and Security, 2024.

A Comparison of Anomaly Detection Algorithms with Applications on Recoater Streaking in an Additive Manufacturing Process. In: Rapid Prototyping Journal, 2024, (Submitted).

Fine Grained vs Coarse Grained Channel Quality Prediction: A 5G-RedCap Perspective for Industrial IoT Networks. In: 20th IEEE International Conference on Factory Communication Systems (WFCS 2024), 2024.